In our most recent post we discussed the current set of experiments that we are conducting, using the MNIST dataset. We've also been looking at the NIST dataset which is similar, but extends to handwritten letters (as well as digits).

These datasets are extremely popular and freely available, so they make a great choice for testing an algorithm and comparing it with published benchmarks.

The MNIST data is not directly available as images, though. Although its file format is standardized, it is not a common one. It's easy to find snippets of code that convert this format into standard images (such as PNG or JPG), but putting them together and getting them working is not where you want to spend your time when you could be designing and running your experiment!

We've been through that phase, so we're very happy to open-source our code and help others get going faster.

These are simple, small, self-contained Java projects with ZERO dependencies. There are two projects: one preprocesses MNIST files into images; the other processes NIST images to make them equivalent to the MNIST images, so that both can easily be used in the same experimental setup. See the README for more information about the steps taken.
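For readers who have not met the format before, the MNIST image files use the IDX layout: a big-endian header (magic number, image count, rows, columns) followed by one unsigned byte per pixel. The sketch below shows the idea in Python rather than in the Java of the projects themselves. The function name `parse_idx_images` is ours for illustration and is not taken from the repositories.

```python
import struct

IDX_IMAGE_MAGIC = 0x00000803  # unsigned-byte data with 3 dimensions


def parse_idx_images(data):
    """Parse an uncompressed IDX image file into images[row][col] pixel lists."""
    # Header: four big-endian unsigned 32-bit integers.
    magic, count, rows, cols = struct.unpack(">IIII", data[:16])
    if magic != IDX_IMAGE_MAGIC:
        raise ValueError("unexpected magic number: %#010x" % magic)
    if len(data) < 16 + count * rows * cols:
        raise ValueError("file is shorter than its header claims")
    images = []
    offset = 16
    for _ in range(count):
        image = []
        for _ in range(rows):
            # Each pixel is a single byte in the range 0..255.
            image.append(list(data[offset:offset + cols]))
            offset += cols
        images.append(image)
    return images
```

The files are usually distributed gzip-compressed, so in practice the bytes would come from something like `gzip.open(path, "rb").read()`. Writing the pixel grids out as PNG needs an imaging library and is left out of this sketch.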

Preprocess-MNIST

Preprocess_NIST_SD19

Friday, 28 April 2017

Tuesday, 20 September 2016

Region-Layer Experiments

Region-Layer component. The objective is to understand to what extent these ideas work, and to expose limitations both in implementation and theory.

Results will be posted to the blog and written up for publication if, or when, we reach an acceptable level of novelty and rigor.

We are not trying to beat benchmarks here. We’re trying to show whether certain ideas have useful qualities - the best way to tackle specific AI problems is almost certainly not an AGI way. But what we’d love to see is that AGI-inspired methods can perform close to state-of-the-art (e.g. deep convolutional networks) on a wide range of problems. Now that would be general intelligence!

Dataset Choice

We are going to start with the MNIST digit classification dataset, and perform a number of experiments based on that. In future we will look at some more sophisticated image / object classification datasets such as LabelMe or Caltech_101.

The good thing about MNIST is that it’s simple and has been extremely widely studied. It’s easy to work with the data and the images are a practical size - big enough to be interesting, but not so big as to require lots of preprocessing or too much memory. Despite only 28x28 pixels, variations in digit appearance give considerable depth to the data (example digit '5' above).

The bad thing about MNIST is that it’s largely “solved” by supervised learning algorithms. A range of different supervised techniques have reached human performance and it’s debatable whether any further improvements are genuine.

So what’s the point of trying new approaches? Well, supervised algorithms have some odd qualities, perhaps due to the narrowness of training samples or the specificity of the cost function. One example is the discovery of “adversarial examples” - images that look easily classifiable to the naked eye but are misclassified by a trained network because they exploit weaknesses in it.

But the biggest drawback of supervised learning is the need to tell it the “correct” answer for every input. This has led to a range of techniques - such as transfer learning - to make the most of what training data is available, even if not directly relevant. But fundamentally, supervised learning is unlike the experience of an agent learning as it explores its world. Animals can learn without a tutor.

However, unsupervised results with MNIST are less widely reported. Partly this is because you need to come up with a way to measure the performance of an unsupervised method. The most common approach is to use unsupervised networks to boost the performance of a final supervised network layer - but in MNIST the supervised layer is so powerful it’s hard to distinguish the contribution of the unsupervised layers. Nevertheless, these experiments are encouraging because having a few unsupervised layers seems to improve overall performance, compared to all-supervised networks. So, quite apart from easing the limited-data problem of supervised learning, unsupervised learning actually seems to add something.

One possible method of capturing the contribution of unsupervised layers alone is the Rand Index, which measures the similarity between two clusterings of the same data. However, it assumes each input is assigned to exactly one cluster, whereas we are intending to use a distributed representation where there will be overlap between similar representations - that’s one of the features of the algorithm!
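For reference, here is a minimal sketch of the Rand Index in Python. It counts the fraction of item pairs on which two clusterings agree, that is, pairs placed together in both or apart in both. The function name `rand_index` and the list-of-labels input are our own choices for illustration.

```python
from itertools import combinations


def rand_index(labels_a, labels_b):
    """Fraction of item pairs on which two hard clusterings agree."""
    if len(labels_a) != len(labels_b):
        raise ValueError("both clusterings must label the same items")
    pairs = list(combinations(range(len(labels_a)), 2))
    if not pairs:
        raise ValueError("need at least two items")
    agreements = 0
    for i, j in pairs:
        same_in_a = labels_a[i] == labels_a[j]
        same_in_b = labels_b[i] == labels_b[j]
        # Agreement: the pair is together in both, or apart in both.
        if same_in_a == same_in_b:
            agreements += 1
    return agreements / len(pairs)
```

Each item carries exactly one cluster label here, which is why the measure fits awkwardly with overlapping, distributed representations.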

So, for now we’re going to go for the simplest approach we can think of, and measure the correlation between the active cells in selected hidden layers and each digit label, and see if the correlation alone is enough to pick the right label given a set of active cells. If the concepts defined by the digits exist somewhere in the hierarchy, they should be detectable as features uniquely correlated with specific labels...

Note also that we’re not doing any preprocessing of the MNIST images except binarization at threshold 0.5. Since the MNIST dataset is very high contrast, hopefully the threshold doesn’t matter much: It’s almost binary already.
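The binarization step is small enough to show in full. This is a sketch under two assumptions of ours: pixel values are grey levels from 0 to 255 that are first scaled to the unit interval, and a pixel exactly at the threshold is treated as off.

```python
def binarize(image, threshold=0.5, max_value=255):
    """Map grey levels in [0, max_value] to 0 or 1 by thresholding on [0, 1]."""
    return [
        # Assumption: strictly greater than the threshold counts as "on".
        [1 if pixel / max_value > threshold else 0 for pixel in row]
        for row in image
    ]
```

Because most MNIST pixels sit near either end of the range, moving the threshold moderately should change few of them.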

Sequence Learning Tests

Before starting the experiments proper we conducted some ad-hoc tests to verify the features of the Region-Layer are implemented as intended. Remember, the Region-Layer has two key capabilities:

- Classification … of the feedforward input, and

- Prediction … of future classification results (i.e. future internal states)

See here and here to understand the classification role, and here for more information about prediction. Taken together, the ability to classify and predict future classifications allows sequences of input to be learned. This is a topic we have looked at in detail in earlier blog posts and we have some fairly effective techniques at our disposal.

We completed the following tests:

- Cycle 0,1,2: We verified that the algorithm could predict the set of active cells in a short cycle of images. This ensures the sequence learning feature is working. The same image was used for each instance of a particular digit (i.e. there was no variation in digit appearance).

- Cycle 0,1,...,9: We tested a longer cycle. Again, the Region-Layer was able to predict the sequence perfectly.

- Cycle 0,1,2,3, 0,2,3,1: We tested an ambiguous cycle. At 0, it appears that the next state can be 1 or 2, and similarly, at 3, the next state can be 0 or 1. However, due to the variable order modelling behaviour of the Region-Layer, a single Region-Layer is able to predict this cycle perfectly. Note that first-order prediction cannot predict this sequence correctly.

- Cycle 0,1,2,3,1,2,4,0,2,3,1,2,1,5,0,3,2,1,4,5: We tested a complex graph of state sequences and again a single Region-Layer was able to predict the sequence perfectly. We also were able to predict this using only first order modelling and a deep hierarchy.

After completion of the unit tests we were satisfied that our Region-Layer component has the ability to efficiently produce variable order models of observed sequences using unsupervised learning, assuming that the states can reliably be detected.
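To see why the ambiguous cycle defeats a fixed first-order model, it helps to count which contexts are followed by more than one state. The Python sketch below does only that counting. It is not the Region-Layer algorithm, and the function name `ambiguous_contexts` is ours.

```python
from collections import defaultdict


def ambiguous_contexts(cycle, order):
    """Return the contexts of length `order` that have more than one successor.

    The sequence is treated as a repeating cycle, so indices wrap around.
    """
    n = len(cycle)
    successors = defaultdict(set)
    for i in range(n):
        context = tuple(cycle[(i + k) % n] for k in range(order))
        successors[context].add(cycle[(i + order) % n])
    return sorted(c for c, nxt in successors.items() if len(nxt) > 1)
```

For the cycle 0,1,2,3,0,2,3,1, calling the function with `order` set to 1 and then 2 still returns some ambiguous contexts, while `order` 3 returns none. A model therefore has to carry more than the previous state, at least some of the time, to predict this cycle perfectly.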

Experiments

Now we come to the harder part. What if each digit exemplar image is ambiguous? In other words, what if each ‘0’ is represented by a randomly selected ‘0’ image from the MNIST dataset? The ambiguity of appearance means that the observed sequences will appear to be non-deterministic.

We decided to run the following experiments:

Experiment 1: Random image classification

In this experiment there will be no predictable sequence; each digit must be recognized solely based on its appearance. The classic experiment is used: Up to N training passes over the entire MNIST dataset, followed by fixing the internal weights and a single pass to calculate the correlation between each active cell in selected hidden layer[s] and the digit labels. Then, a single pass over the test set recording, for each test input image, the most highly correlated digit label for each set of active hidden cells. The algorithm gets a “correct” result if the most correlated label is the correct label.

- Passes 1-N: Train networks

Present each digit in the training set once, in a random order. Train the internal weights of the algorithm. Repeated several times if necessary.

- Pass N+1: Measure correlation of hidden layer features with training images.

Present each digit in the training set once, in a random order. Accumulate the frequency with which each active cell is associated with each digit label. After all images have been seen, convert the observed frequencies to correlations.

- Pass N+2: Predict label of test images.

Present each digit in the testing set once, in a random order. Use the correlations between cell activity and training labels to predict the most likely digit label given the set of active cells in selected Region-Layer components (they are arranged into a hierarchy).
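Passes N+1 and N+2 can be sketched in a few lines of Python. Two things here are assumptions of ours rather than a description of the actual implementation: the "correlation" is approximated by the relative frequency of each label among the occasions a cell was active, and the prediction sums those scores over the active cells. The class and method names are hypothetical.

```python
from collections import defaultdict


class CellLabelCorrelator:
    """Accumulates cell/label co-occurrence, then votes for a label."""

    def __init__(self):
        # counts[cell][label] = how often the cell was active for that label
        self.counts = defaultdict(lambda: defaultdict(int))
        self.scores = {}

    def observe(self, active_cells, label):
        """Pass N+1: record one training image's active cells and label."""
        for cell in active_cells:
            self.counts[cell][label] += 1

    def finalize(self):
        """Convert raw frequencies to per-cell label scores.

        Assumption: relative frequency stands in for correlation.
        """
        self.scores = {}
        for cell, by_label in self.counts.items():
            total = sum(by_label.values())
            self.scores[cell] = {lab: n / total for lab, n in by_label.items()}

    def predict(self, active_cells):
        """Pass N+2: return the label with the highest summed score."""
        votes = defaultdict(float)
        for cell in active_cells:
            for label, score in self.scores.get(cell, {}).items():
                votes[label] += score
        if not votes:
            return None  # none of the active cells were seen in training
        # Sorting first makes ties resolve to the smallest label.
        return max(sorted(votes), key=lambda label: votes[label])
```

A test image counts as correct when `predict` returns its true label. Cells that fire for many different digits contribute little to any one label under this scoring.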

Experiment 2: Image classification & sequence prediction

What if the digit images are not in a random order? We can use the English language to generate a training set of digit sequences. For example, we can get a book, convert each character to a 2 digit number and select random appropriate digit images to represent each number.

The motivation for this experiment is to see how the sequence learning can boost image recognition: Our Region-Layer component is supposed to be able to integrate both sequential and spatial information. This experiment actually has a lot of depth because English isn’t entirely predictable - if we use a different book for testing, there’ll be lots of sub-sequences the algorithm has never observed before. There’ll be uncertainty in image appearance and uncertainty in sequence, and we’d like to see how a hierarchy of Region-Layer components responds to both. Our expectation is that it will improve digit classification performance beyond the random image case.
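As a rough illustration of how such a sequence could be generated, the Python sketch below encodes text as digits and picks a random exemplar image for each digit. The particular character mapping (a=00 up to z=25, space=26, everything else skipped) is an assumption made for this example, as are the function names.

```python
import random
import string

# Assumed 27-symbol alphabet: lowercase letters plus space.
ALPHABET = string.ascii_lowercase + " "


def text_to_digits(text):
    """Encode each supported character as two decimal digits."""
    digits = []
    for ch in text.lower():
        index = ALPHABET.find(ch)
        if index < 0:
            continue  # assumption: characters outside the alphabet are skipped
        digits.extend(divmod(index, 10))  # tens digit, then units digit
    return digits


def pick_images(digits, images_by_digit, rng=random):
    """Choose a random exemplar image for each digit in the sequence."""
    return [rng.choice(images_by_digit[d]) for d in digits]
```

Here `images_by_digit` is expected to map each digit 0 to 9 to a list of images with that label. Using one book for training and another for testing only changes the text passed to `text_to_digits`.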

In the next article, we will describe the specifics of the algorithms we implemented and tested on these problems.

A final article will present some results.

Labels: AGI, Experimental Framework, MNIST, unsupervised learning

Friday, 9 September 2016

The Region-Layer: A building block for AGI

Introducing the Region-Layer

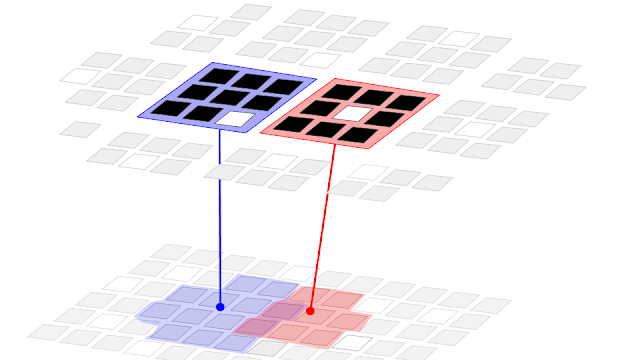

From our background reading (see here, here, or here) we believe that the key component of a general intelligence can be described as a structure of “Region-Layer” components. As the name suggests, these are finite 2-dimensional areas of cells on a surface. They are surrounded by other Region-Layers, which may be connected in a hierarchical manner; and can be sandwiched by other Region-Layers, on parallel surfaces, by which additional functionality can be achieved. For example, one Region-Layer could implement our concept of the Objective system, another Region-Layer the Subjective system. Each Region-Layer approximates a single Layer within a Region of Cortex, part of one vertex or level in a hierarchy. For more explanation of this terminology, see earlier articles on Layers and Levels.

The Region-Layer has a biological analogue - it is intended to approximate the collective function of two cell populations within a single layer of a cortical macrocolumn. The first population is a set of pyramidal cells, which we believe perform a sparse classifier function of the input; the second population is a set of inhibitory interneuron cells, which we believe cause the pyramidal cells to become active only in particular sequential contexts, or only when selectively dis-inhibited for other purposes (e.g. attention). Neocortex layers 2/3 and 5 are specifically and individually the inspirations for this model: Each Region-Layer object is supposed to approximate the collective cellular behaviour of a patch of just one of these cortical layers.

We assume the Region-Layer is trained by unsupervised learning only - it finds structure in its input without caring about associated utility or rewards. Learning should be continuous and online, learning as an agent from experience. It should adapt to non-stationary input statistics at any time.

The Region-Layer should be self-organizing: Given a surface of Region-Layer components, they should arrange themselves into a hierarchy automatically. [We may defer implementation of this feature and initially implement a manually-defined hierarchy]. Within each Region-Layer component, the cell populations should exhibit a form of competitive learning such that all cells are used efficiently to model the variety of input observed.

We believe the function of the Region-Layer is best described by Jeff Hawkins: To find spatial features and predictable sequences in the input, and replace them with patterns of cell activity that are increasingly abstract and stable over time. Cumulative discovery of these features over many Region-Layers amounts to an incremental transformation from raw data to fully grounded but abstract symbols.

Within a Region-Layer, Cells are organized into Columns (see figure 1). Columns are organized within the Region-Layer to optimally cover the distribution of active input observed. Each Column and each Cell responds to only a fraction of the input. Via these two levels of self-organization, the set of active cells becomes a robust, distributed representation of the input.

Given these properties, a surface of Region-Layer components should have nice scaling characteristics, both in response to changing the size of individual Region-Layer column / cell populations and the number of Region-Layer components in the hierarchy. Adding more Region-Layer components should improve input modelling capabilities without any other changes to the system.

So let's put our cards on the table and test these ideas.

Region-Layer Implementation

Parameters

For the algorithm outlined below very few parameters are required. The few that are mentioned are needed merely to describe the resources available to the Region-Layer. In theory, they are not affected by the qualities of the input data. This is a key characteristic of a general intelligence.

- RW: Width of region layer in Columns

- RH: Height of region layer in Columns

- CW: Width of column in Cells

- CH: Height of column in Cells

Inputs and Outputs

- Feed-Forward Input (FFI): Must be sparse, and binary. Size: A matrix of any dimension*.

- Feed-Back Input (FBI): Sparse, binary. Size: A vector of any dimension

- Prediction Disinhibition Input (PDI): Sparse, rare. Size: Region Area+

- Feed-Forward Output (FFO): Sparse, binary and distributed. Size: Region Area+

* the 2D shape of input[s] may be important for learning receptive fields of columns and cells, depending on implementation.

+ Region Area = CW * CH * RW * RH
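The four parameters and the Region Area formula translate directly into a small configuration object. This is a sketch in Python; the class name `RegionLayerShape` and the property names are ours.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionLayerShape:
    rw: int  # RW: width of the region layer, in columns
    rh: int  # RH: height of the region layer, in columns
    cw: int  # CW: width of a column, in cells
    ch: int  # CH: height of a column, in cells

    @property
    def columns(self):
        """Number of columns in the region layer."""
        return self.rw * self.rh

    @property
    def cells_per_column(self):
        """Number of cells in each column."""
        return self.cw * self.ch

    @property
    def region_area(self):
        """Region Area = CW * CH * RW * RH, the size of PDI and FFO."""
        return self.cw * self.ch * self.rw * self.rh
```

For example, a layer of 4 by 3 columns with 2 by 2 cells each has a region area of 48, so its feed-forward output would be a sparse binary vector of 48 elements. Those sizes are illustrative only.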

Pseudocode

Here is some pseudocode for iterative update and training of a Region-Layer. Both occur simultaneously.

We also have fully working code. In the next few blog posts we will describe some of our concrete implementations of this algorithm, and the tests we have performed on it. Watch this space!

    function: UpdateAndTrain(
        feed_forward_input,
        feed_back_input,
        prediction_disinhibition
    )

    // if no active input, then do nothing
    if( sum( feed_forward_input ) == 0 ) {
        return
    }

    // Sparse activation
    // Note: Can be implemented via a Quilt[1] of any competitive learning algorithm,
    // e.g. Growing Neural Gas [2], Self-Organizing Maps [3], K-Sparse Autoencoder [4].
    activity(t) = 0

    for-each( column c ) {
        // find cell x that most responds to FFI
        // in current sequential context given:
        //  a) prior active cells in region
        //  b) feedback input.
        x = findBestCellsInColumn( feed_forward_input, feed_back_input, c )
        activity(t)[ x ] = 1
    }

    // Change detection
    // if active cells in region unchanged, then do nothing
    if( activity(t) == activity(t-1) ) {
        return
    }

    // Update receptive fields to organize columns
    trainReceptiveFields( feed_forward_input, columns )

    // Update cell weights given column receptive fields
    // and selected active cells
    trainCells( feed_forward_input, feed_back_input, activity(t) )

    // Predictive coding: output false-negative errors only [5]
    for-each( cell x in region-layer ) {
        coding = 0

        if( ( activity(t)[x] == 1 ) and ( prediction(t-1)[x] == 0 ) ) {
            coding = 1
        }

        // optional: mute output from region, for attentional gating of hierarchy
        if( prediction_disinhibition(t)[x] == 0 ) {
            coding = 0
        }

        output(t)[x] = coding
    }

    // Update prediction
    // Note: Predictor can be as simple as first-order Hebbian learning.
    // The prediction model is variable order due to the inclusion of sequential
    // context in the active cell selection step.
    trainPredictor( activity(t), activity(t-1) )
    prediction(t) = predict( activity(t) )
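The predictive coding step of the pseudocode is simple enough to write as runnable Python. In this sketch cells are represented as sets of indices, which is our choice and not necessarily how the real implementation stores them. Passing `disinhibited=None` is taken to mean that no gating is applied.

```python
def predictive_coding_output(active, predicted, disinhibited=None):
    """Return the cells that are active now but were not predicted.

    `active` is the set of active cells at time t, `predicted` is the set
    predicted at time t-1. Only false negatives are output; cells that were
    predicted but did not become active produce nothing.
    """
    output = set(active) - set(predicted)
    if disinhibited is not None:
        # Optional attentional gating: mute every cell outside this set.
        output &= set(disinhibited)
    return output
```

When the sequence is perfectly predicted the output is empty, so a higher Region-Layer only receives the parts of the input that the lower one failed to anticipate.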

[2] https://papers.nips.cc/paper/893-a-growing-neural-gas-network-learns-topologies.pdf

[3] http://www.cs.bham.ac.uk/~jxb/NN/l16.pdf

[4] https://arxiv.org/pdf/1312.5663

[5] http://www.ncbi.nlm.nih.gov/pubmed/10195184

Labels: AGI, Artificial General Intelligence, columns, Hierarchical Generative Models, Predictive Coding, pyramidal cell, sparse coding, Sparse Distributed Representations, symbol grounding problem

Wednesday, 18 May 2016

Reading list - May 2016

Figure: Digit classification error over time in our experiments. The image isn't very helpful but it's a hint as to why we're excited :)

Project AGI

A few weeks ago we paused the "How to build a General Intelligence" series (part 1, part 2, part 3, part 4). We paused it because the next article in the series requires us to specify everything in detail, and we need working code to do that.

We have been testing our algorithm on a variety of MNIST-derived handwritten digit datasets, to better understand how well it generalizes its representation of digit-images and how it behaves when exposed to varying degrees of predictability. Initial results look promising: We will post everything here once we've verified them and completed the first batch of proper experiments. The series will continue soon!

Deep Unsupervised Learning

Our algorithm is a type of Online Deep Unsupervised Learning, so naturally we're looking carefully at similar algorithms.

We recommend this video of a talk by Andrew Ng. It starts with a good introduction to the methods and importance of feature representation and touches on types of automatic feature discovery. He looks at some of the important feature detectors in computer vision, such as SIFT and HoG, and shows how feature detectors - such as edge detectors - can emerge from more general pattern recognition algorithms such as sparse coding. For more on sparse coding see Shakir's excellent machine learning blog.

For anyone struggling to intuit deep feature discovery, I also loved this post on Y Combinator which nicely illustrates how and why deep networks discover useful features, and why the depth helps.

The latter part of the video covers Ng's latest work on deep hierarchical sparse coding using Deep Belief Networks, in turn based on AutoEncoders. He reports benchmark-beating results on video activity and phoneme recognition with this framework. You can find details of his deep unsupervised algorithm here:

http://deeplearning.stanford.edu/wiki

Finally he presents a plot suggesting that training dataset size is a more important determinant of eventual supervised network performance than algorithm choice! This is a fundamental limitation of supervised learning, where the necessary labelled training data is much more limited than in unsupervised learning (in the latter case, the real world provides a handy training set!)

Figure: Effect of algorithm and training set size on accuracy. Training set size more significant. This is a fundamental limitation of supervised learning.

Online K-sparse autoencoders (with some deep-ness)

We've also been reading this paper by Makhzani and Frey about deep online learning with auto-encoders (a type of neural network that is trained with the usual supervised machinery, but with its own input as the target, so that it learns to reconstruct its input without needing labels). Actually we've struggled to find any comparison of autoencoders to earlier methods of unsupervised learning both in terms of computational efficiency and ability to cover the search space effectively. Let us know if you find a paper that covers this.

The Makhzani paper has some interesting characteristics - the algorithm is online, which means it receives data as a stream rather than in batches. It is also sparse, which we believe is desirable from a representational perspective.
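As we understand the paper, the sparsity comes from a simple rule in the hidden layer: keep the k largest activations and set the rest to zero. The Python sketch below shows only that selection step, not the training of the autoencoder. The function name `k_sparse` is ours.

```python
def k_sparse(activations, k):
    """Keep the k largest activations and set all others to zero."""
    if k <= 0:
        return [0.0] * len(activations)
    # Indices ordered from largest to smallest activation.
    ranked = sorted(
        range(len(activations)),
        key=lambda i: activations[i],
        reverse=True,
    )
    keep = set(ranked[:k])
    return [a if i in keep else 0.0 for i, a in enumerate(activations)]
```

With k much smaller than the number of hidden units, every input is represented by a small set of active units, which is the kind of sparse code we are interested in.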

One limitation is that the solution is most likely unable to handle changes in input data statistics (i.e. non-stationary problems). The reason this is an important quality is that in any arbitrarily deep network the typical position of a vertex is between higher and lower vertices. If all vertices are continually learning, the problem being modelled by any single vertex is constantly changing. Therefore, intermediate vertices must be capable of online learning of non-stationary problems, otherwise the network will not be able to function effectively. Makhzani and Frey instead use the greedy layerwise training approach from Deep Belief Networks. The authors describe this approach:

"4.6. Deep Supervised Learning Results The k-sparse autoencoder can be used as a building block of a deep neural network, using greedy layerwise pre-training (Bengio et al., 2007). We first train a shallow k-sparse autoencoder and obtain the hidden codes. We then fix the features and train another k-sparse autoencoder on top of them to obtain another set of hidden codes. Then we use the parameters of these autoencoders to initialize a discriminative neural network with two hidden layers."

The limitation introduced can be thought of as an inability to escape from local minima that result from prior training. This paper by Choromanska et al tries to explain why this happens.

Greedy layerwise training is an attempt to work around the fact that deep belief networks of Autoencoders cannot effectively handle nonstationary problems.

For more information, here are some papers on deep sparse networks built from autoencoders:

- http://web.stanford.edu/class/archive/cs/cs294a/cs294a.1104/sparseAutoencoder.pdf

- https://www.elen.ucl.ac.be/Proceedings/esann/esannpdf/es2010-73.pdf

- http://www.jmlr.org/proceedings/papers/v22/zhou12b/zhou12b.pdf

Variations on Supervised Learning - a Taxonomy

Back to supervised learning, and the limitation of training dataset size. Thanks to a discussion with Jay Chakravarty we have this brief taxonomy of supervised learning workarounds for insufficient training datasets:

Weakly supervised learning: [For poorly labelled training data] where you want to learn models for object recognition under weak supervision - you have, say, object labels for images, but no localization (e.g. bounding box) for the object in the image (there might be other objects in the image as well). You would use a Latent SVM to solve the problem of localizing the objects in the images, and at the same time learning a classifier for them.

Another example of weakly supervised learning is that you have a bag of positive samples mixed up with negative training samples, but also have a bag of purely negative samples - you would use Multiple Instance Learning for this.

Cross-modal adaptation: where one mode of data supervises another - e.g. audio supervises video or vice-versa.

Domain adaptation: model learnt on one set of data is adapted, in unsupervised fashion, to new datasets with slightly different data distributions.

Transfer learning: using the knowledge gained in learning one problem on a different, but related problem. Here's a good example of transfer learning, a finalist in the NVIDIA 2016 Global Impact Award. The system learns to predict poverty from day and night satellite images, with very few labelled samples.

Full paper:

http://arxiv.org/pdf/1510.00098v2.pdf

Interactive Brain Concept Map

We enjoyed this interactive map of the distribution of concepts within the cortex, captured using fMRI and produced by the Gallant Lab (source papers here).

Using the map you can find the voxels corresponding to various concepts. Although the results may not generalize due to the small sample size (7), the map gives you a good idea of the hierarchical structure the brain has produced, and what the intermediate concepts represent.

Thanks to David Ray @ http://cortical.io for the link.

Figure: Interactive brain concept map

OpenAI Gym - Reinforcement Learning platform

We also follow the OpenAI project with interest. OpenAI have just released their "Gym" - a platform for training and testing reinforcement learning algorithms. Have a play with it here: https://openai.com/blog/openai-gym-beta/

According to Wired magazine, OpenAI will continue to release free and open source software (FOSS) for the wider impact this will have on uptake. There are many companies now competing to win market share in this space.

We're regular readers of this blog and have been meaning to mention it for months. Worth reading.

How the brain generates actions

A big gap in our knowledge is how the brain generates actions from its internal representation. This new paper by Vicente et al challenges the established (rather vague) dogma on how the brain generates actions.

“We found that contrary to common belief, the indirect pathway does not always prevent actions from being performed, it can actually reinforce the performance of actions. However, the indirect pathway promotes a different type of actions, habits.”

This is probably quite informative for reverse-engineering purposes. Full paper here.

Hierarchical Temporal Memory

HTM is an online method for feature discovery and representation and now we have a baseline result for HTM on the famous MNIST numerical digit classification problem. Since HTM works with time-series data, the paper compares HTM to LSTM (Long Short-Term Memory), the leading supervised-learning approach to this problem domain.

It is also interesting that the paper deals with adaptation to sudden changes in the input data statistics, the very problem that frustrates the deep belief networks described above.

Full paper by Cui et al here.

For a detailed mathematical description of HTM see this paper by Mnatzaganian and Kudithipudi.

Labels: AGI, Artificial General Intelligence, deep belief networks, Reinforcement Learning, Software, sparse coding, Sparse Distributed Representations, stationary problem, unsupervised learning

Wednesday, 23 March 2016

Reading list: Assorted AGI links. March 2016

[Figure: A Minecraft API is now available to train your AGIs]

Our News

We are working hard on experiments, and software to run experiments. So this week there is no normal blog post. Instead, here’s an eclectic mix of links we’ve noticed recently.

First, AlphaGo continues to make headlines. Of interest to Project AGI is Yann LeCun agreeing with us that unsupervised hierarchical modelling is an essential step in building intelligence with humanlike qualities [1]. We also note this IEEE Spectrum post by Jean-Christophe Baillie [2] which argues, as we did [3], that we need to start creating embodied agents.

Minecraft

Speaking of which, the BBC reports that the Minecraft team are preparing an API for machine learning researchers to test their algorithms in the famous game [4]. The Minecraft team also stress the value of embodied agents and the depth of gameplay and graphics. It sounds like Minecraft could be a crucial testbed for an AGI. We’re always on the lookout for test problems like these.

Of course, to play Minecraft well you need to balance local activities - building, mining etc. - with exploration. Another frontier, beyond AlphaGo, is exploration. Monte-Carlo Tree Search (as used in AlphaGo) explores in more limited ways than humans do, argues John Langford [5].

Sharing places with robots

If robots are going to be embodied, we need to make some changes. Wired magazine says that a few small changes to the urban environment and driver behaviour will make the rollout of autonomous vehicles easier [6]. It’s important to meet the machines halfway, for the benefit of all.

This excellent paper on robotic grasping also caught our attention [7]. A key challenge in this area is adaptability to slightly varying circumstances, such as variations in the objects being grasped and their pose relative to the arm. General solutions to these problems will suddenly make robots far more flexible and applicable to a greater range of tasks.

Hierarchical Quilted Self-Organizing Maps & Distributed Representations

Last week I also rediscovered this older paper on Hierarchical-Quilted Self-Organizing Maps (HQSOMs) [8]. This is close to our hearts because we originally believed this type of representation was the right approach for AGI. With the success of Deep Convolutional Networks (DCNs) it’s worth looking back and noticing the similarities between the two. While HQSOM is purely unsupervised learning (a plus, see comment from Yann LeCun above), DCNs are trained by supervised techniques. However, both methods use small, overlapping, independent units - analogous to biological cortical columns - to classify different patches of the input. The overlapping and independent classifiers lead to robust and distributed representations, which is probably the reason these methods work so well.

Distributed representation is one of the key features of Hawkins’ Hierarchical Temporal Memory (HTM). Fergal Byrne has recently published an updated description of the HTM algorithm [9] for those interested.

We at Project AGI believe that a grid-like “region” of columns employing a “Winner-Take-All” policy [10], with overlapping input receptive fields, can produce a distributed representation. Different regions are then connected together into a tree-like structure (acyclic). The result is a hierarchy. Not only does this resemble the state-of-the-art methods of DCNs, but there’s a lot of biological evidence for this type of representation too. This paper by Rinkus [11] describes columnar features arranged into a hierarchy, with winner-take-all behaviour implemented via local inhibition.

Rinkus says: “Saying only that a group of L2/3 units forms a WTA CM places no a priori constraints on what their tuning functions or receptive fields should look like. This is what gives that functionality a chance of being truly generic, i.e., of applying across all areas and species, regardless of the observed tuning profiles of closely neighboring units.”
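To make the idea of a region of winner-take-all columns with overlapping receptive fields more concrete, here is a minimal sketch. It is not our implementation, nor the model in [11]: the function names `make_region` and `encode`, the random weights, the 1-D input and all of the sizes are assumptions chosen purely for illustration. Each column sees an overlapping window of the input, and only the best-matching cell in each column stays active, so the input ends up represented by a set of winners spread across the columns.

```python
import random

def make_region(num_columns, cells_per_column, field_size, seed=0):
    """Give every cell in every column a random weight vector (illustrative only)."""
    rng = random.Random(seed)
    return [[[rng.random() for _ in range(field_size)]
             for _ in range(cells_per_column)]
            for _ in range(num_columns)]

def encode(region, inputs, stride):
    """Return one winning cell index per column (winner-take-all within each column)."""
    field_size = len(region[0][0])
    code = []
    for c, cells in enumerate(region):
        # Receptive fields overlap whenever stride < field_size.
        start = c * stride
        patch = inputs[start:start + field_size]
        responses = [sum(w * x for w, x in zip(weights, patch)) for weights in cells]
        # Local inhibition: only the most responsive cell in the column survives.
        code.append(responses.index(max(responses)))
    return code

inputs = [0, 1, 1, 0, 0, 1, 0, 1, 1, 0]
region = make_region(num_columns=4, cells_per_column=5, field_size=4, seed=42)
code = encode(region, inputs, stride=2)
print(code)  # one winner per column: together they form the distributed code
```

With a field size of 4 and a stride of 2, neighbouring columns share half of their inputs, which is the overlap referred to above. A real region would also learn its weights rather than keep them random.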

Reinforcement Learning

But unsupervised learning can’t be the only form of learning. We also need to consider consequences, and so we need reinforcement learning to take account of these. As Yann said, it is the “cherry on the cake” (this is probably understating the difficulty of the RL component, but right now it seems easier than creating representations).

Shakir’s Machine Learning blog has a great post exploring the biology of reinforcement learning [12] within the brain. This is a good overview of the topic and useful for ML researchers wanting to access this area.

But regular readers of this blog will remember that we’re obsessed with unfolding or inverting abstract plans into concrete actions. We found a great paper by Manita et al [13] that shows biological evidence for the translation and propagation of an abstract concept into sensory and motor areas, where it can assist with perception. This is the hierarchy in action.

Long-Short-Term Memory (LSTM)

One more tack before we finish. Thanks to Jay for this link to NVIDIA’s description of LSTMs [14], an architecture for recurrent neural networks (i.e. the state can depend on the previous state of the cells). It’s a good introduction, but we’re still fans of Monner’s Generalized LSTM [15].

Fun thoughts

Now let’s end with something fun. Wired magazine again, describing watching AlphaGo as our first taste of a superhuman intelligence [16]. Although this is a “narrow” intelligence, not a general one, it has qualities beyond anything we’ve experienced in this domain before. What’s more, watching these machines can make us humans better, without any nasty bio-engineering:

“But as hard as it was for Fan Hui to lose back in October and have the loss reported across the globe - and as hard as it has been to watch Lee Sedol’s struggles - his primary emotion isn’t sadness. As he played match after match with AlphaGo over the past five months, he watched the machine improve. But he also watched himself improve. The experience has, quite literally, changed the way he views the game. When he first played the Google machine, he was ranked 633rd in the world. Now, he is up into the 300s. In the months since October, AlphaGo has taught him, a human, to be a better player. He sees things he didn’t see before. And that makes him happy. “So beautiful,” he says. “So beautiful.”

References

[1] https://www.facebook.com/yann.lecun/posts/10153426023477143

[2] http://spectrum.ieee.org/automaton/robotics/artificial-intelligence/why-alphago-is-not-ai

[3] http://blog.agi.io/2016/03/what-after-alphago.html

[4] http://www.bbc.com/news/technology-35778288

[5] http://cacm.acm.org/blogs/blog-cacm/199663-alphago-is-not-the-solution-to-ai/fulltext

[6] http://www.wired.com/2016/03/self-driving-cars-wont-work-change-roads-attitudes/

[7] http://arxiv.org/pdf/1603.02199v1.pdf

[8] http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.84.1401&rep=rep1&type=pdf

[9] http://arxiv.org/pdf/1509.08255v2.pdf

[10] https://en.wikipedia.org/wiki/Winner-take-all_(computing)

[11] http://journal.frontiersin.org/article/10.3389/fnana.2010.00017/full

[12] http://blog.shakirm.com/2016/02/learning-in-brains-and-machines-1/

[13] https://www.researchgate.net/profile/Masanori_Murayama/publication/277144323_A_Top-Down_Cortical_Circuit_for_Accurate_Sensory_Perception/links/556839e008aec22683011a30.pdf

[14] https://devblogs.nvidia.com/parallelforall/deep-learning-nutshell-sequence-learning/

[15] http://www.overcomplete.net/papers/nn2012.pdf

[16] http://www.wired.com/2016/03/sadness-beauty-watching-googles-ai-play-go/

Labels: AGI, AlphaGo, Artificial General Intelligence, deep convolutional networks, HQSOM, machine learning, unsupervised learning

Thursday, 10 March 2016

What's after AlphaGo?

What's AlphaGo?

AlphaGo is a system that can play Go at least as well as the best humans. Go was widely cited as the hardest (and only remaining) game at which humans could beat machines, so this is a big deal. AlphaGo has just defeated a top-ranked human expert.

- AlphaGo Nature paper (Silver et al 2016)

Why is Go hard?

Go is hard because the search-space of possible moves is so large that tree search and pruning techniques, such as those used to beat humans at Chess, won't work - or at least, they won't work well enough, with a feasible amount of memory, to play Go better than the best humans.

Instead, to play Go well, you need to have "intuition" rather than brute search power: To look at the board and spot local (or gross) patterns that represent opportunities or dangers. And in fact, AlphaGo is able to play in this way. It beat the next best computer algorithm "Pachi" 85% of the time without any tree search - just predicting the best action based on its interpretation of the current state. The authors of the AlphaGo Nature paper say:

“During the match against Fan Hui, AlphaGo evaluated thousands of times fewer positions than Deep Blue did in its chess match against Kasparov; compensating by selecting those positions more intelligently, using the policy network, and evaluating them more precisely, using the value network—an approach that is perhaps closer to how humans play.”
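To get a feel for why exhaustive search is hopeless, a game tree can be approximated as b^d move sequences, where b is the number of legal moves per position and d is the game length. The sketch below uses the approximate figures usually quoted for this comparison (b ≈ 35, d ≈ 80 for Chess; b ≈ 250, d ≈ 150 for Go). Treat them as rough assumptions rather than exact counts; the helper name `log10_tree_size` is ours.

```python
import math

def log10_tree_size(branching_factor, depth):
    """Return log10 of branching_factor ** depth, i.e. the exponent of the tree size."""
    return depth * math.log10(branching_factor)

# Approximate, commonly quoted figures (assumptions, not exact counts).
chess = log10_tree_size(branching_factor=35, depth=80)
go = log10_tree_size(branching_factor=250, depth=150)

print(f"Chess: roughly 10^{chess:.0f} move sequences")
print(f"Go:    roughly 10^{go:.0f} move sequences")
print(f"Go's tree is larger by a factor of about 10^{go - chess:.0f}")
```

Working in logarithms keeps the numbers printable. The point is the gap between the two exponents, not the exact values.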

How does AlphaGo work?

AlphaGo is trained by both supervised and reinforcement learning. Supervised learning feedback comes from recordings of moves in expert games. However, these recordings are finite in size and, used naively, would lead to overfitting.

Instead, in AlphaGo a Supervised Learning deep neural network learns to model and predict expert behaviour in the recorded games, via conventional deep learning techniques. Then, a reinforcement learning network is used to generate reward data for novel games that AlphaGo plays against itself! This mitigates the limited size of the supervised learning dataset.

Of course, AlphaGo also wants to play better than the best play observed in the training data. To achieve this, the reinforcement learning network is further trained by playing pairs of networks against each other - mixing the pairs up to prevent policies overfitting each other. This is a really clever feature because it allows AlphaGo to go beyond its training data.

Note also that the neural networks cannot possibly fully represent a sufficiently deep tree of board outcomes within their limited set of weights. Instead, the network has to learn to represent good and bad situations with limited resources. It has to form its own representation of the most salient features, during training.

The neural networks function without pre-defined rules specific to Go; instead they have learned from training data collected from many thousands of human and simulated games.

Key advances

AlphaGo is an important advance because it is able to make good judgments about play situations based on a lossy interpretation in a finitely-sized deep neural network.

What’s more, AlphaGo wasn’t simply taught to copy human experts - it went further, and improved, by playing against itself.

So, what doesn't it do?

The techniques used in deep neural networks have recently been scaled to work effectively on a wide range of problems. In some subject areas, narrow AIs are reaching superhuman performance. However, it is not clear that these techniques will scale indefinitely. Problems such as vanishing gradients have been pushed back, but not necessarily eliminated.

Much greater scale is needed to get intelligent agents into the real world without them being immediately smashed by cars or stuck in holes. But already, it is time to consider what features or characteristics constitute an artificial general intelligence (AGI), beyond raw intelligence (which AIs now have).

AlphaGo isn't a general intelligence; it's designed specifically to play Go. Sure, it's trained rather than programmed manually, but it was designed for this purpose. The same techniques are likely to generalize to many other problems, but they'll need to be applied thoughtfully and retrained.

AlphaGo isn't an Agent. It doesn't have any sense of self, or intent, and its behaviour is pretty static - its policies would probably work the same way in all similar situations, learning only very slowly. You could say that it doesn't have moods, or other transient biases. Maybe this is a good thing! But this also limits its ability to respond to dynamic situations.

AlphaGo doesn't have any desire to explore, to seek novelty or to try different things. AlphaGo couldn't ever choose to teach itself to play Go because it found it interesting. On the other hand, AlphaGo did teach itself to play Go…

All in all, it's a very exciting time to study artificial intelligence!

by David Rawlinson & Gideon Kowadlo

Labels: AGI, AlphaGo, Deep Learning, Go, Reinforcement Learning

Tuesday, 9 February 2016

Some interesting finds: Acyclic hierarchical modelling and sequence unfolding

This week we have a couple of interesting links to share.

From our experiments with generative hierarchical models, we claimed that the model produced by feed-forward processing should not have loops. Now we have discovered a paper by Bengio et al titled "Towards biologically plausible deep learning" [1] that supports this claim. The paper looks for biological mechanisms that mimic key features of deep learning. Probably the credit assignment problem is the most difficult feature to substantiate - ensuring each weight is updated correctly in response to its contribution to the overall output of the network - but the paper does leave me thinking it's plausible.

Anyway the reason I'm talking about it is this quote:

"There is strong biological evidence of a distinct pattern of connectivity between cortical areas that distinguishes between “feedforward” and “feedback” connections (Douglas et al., 1989) at the level of the microcircuit of cortex (i.e., feedforward and feedback connections do not land in the same type of cells). Furthermore, the feedforward connections form a directed acyclic graph with nodes (areas) updated in a particular order, e.g., in the visual cortex (Felleman and Essen, 1991)."

This says that the feedforward modelling process (which we believe is constructing a hierarchical model) is a directed acyclic graph (DAG) - which means it does not have loops, as we predicted. Secondly, it is another source claiming that the representation produced is hierarchical (in this case, a DAG). The cited work is a much older paper - "Distributed hierarchical processing in the primate cerebral cortex" [2]. We're still reading, but there's a lot of good background information here.
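As a small illustration of what "a directed acyclic graph with nodes updated in a particular order" means in practice, the sketch below computes such an update order with a topological sort. The handful of visual areas and the connections between them are a simplified assumption for illustration only, not the connectivity reported in [2], and the helper names are ours.

```python
from graphlib import TopologicalSorter, CycleError  # Python 3.9+

# Each area maps to the set of areas that feed it (illustrative connectivity only).
feedforward = {
    "V2": {"V1"},
    "V4": {"V2"},
    "MT": {"V1", "V2"},
    "IT": {"V4"},
}

def update_order(predecessors):
    """Return an order in which every area comes after all the areas that feed it."""
    return list(TopologicalSorter(predecessors).static_order())

def is_acyclic(predecessors):
    """True if the feedforward graph has no loops, so an update order exists."""
    try:
        update_order(predecessors)
        return True
    except CycleError:
        return False

order = update_order(feedforward)
print(order)                    # V1 always comes first, IT after V4
print(is_acyclic(feedforward))  # True

# Adding a feedforward connection from IT back to V1 would create a loop.
with_loop = dict(feedforward, V1={"IT"})
print(is_acyclic(with_loop))    # False
```

If the feedforward graph had loops there would be no valid update order at all, which is one practical reason the acyclic property matters for a hierarchy.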

The second item to look at this week is a demo by Felix Andrews featuring temporal pooling [3] and sequence unfolding. "Unfolding" means transforming the pooled sequence representation back into its constituent parts - i.e. turning a sequence into a series of steps.

Felix demonstrates that high-level sequence selection can successfully be used to track and predict through observation of the corresponding lower-level sequence. This is achieved by causing the high-level sequence to predict all steps, and then tracking through the predicted sequence using first-order predictions in the lower level. Both levels are necessary - the high level prediction provides guidance for the low-level to ensure it predicts correctly through forks. The low level prediction keeps track of what's next in the sequence.
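The interplay between the two levels can be shown with a toy example. This is not Felix's HTM demo, just a sketch under simple assumptions: sequences are plain lists with no repeated steps, the low level is a first-order transition table, and the names `learn_first_order` and `unfold` and the tea and coffee sequences are invented for illustration.

```python
from collections import defaultdict

def learn_first_order(sequences):
    """Low level: for each step, record every step that has been seen to follow it."""
    transitions = defaultdict(set)
    for steps in sequences.values():
        for current, following in zip(steps, steps[1:]):
            transitions[current].add(following)
    return transitions

def unfold(sequences, transitions, selected, start):
    """Replay the selected high-level sequence as a series of low-level steps."""
    allowed = set(sequences[selected])  # high level predicts all steps of its sequence
    current, replay = start, [start]
    for _ in range(len(sequences[selected]) - 1):
        # Low level proposes what could come next; high level resolves any fork.
        candidates = transitions.get(current, set()) & allowed
        if len(candidates) != 1:
            break  # ambiguous or finished
        current = candidates.pop()
        replay.append(current)
    return replay

sequences = {
    "tea": ["kettle", "cup", "teabag", "pour"],
    "coffee": ["kettle", "cup", "grounds", "plunge"],
}
transitions = learn_first_order(sequences)

print(sorted(transitions["cup"]))  # the fork: the low level alone cannot choose
print(unfold(sequences, transitions, "tea", "kettle"))
print(unfold(sequences, transitions, "coffee", "kettle"))
```

After "cup" the first-order table predicts both "teabag" and "grounds". Only the selected high-level sequence removes the ambiguity, while the low-level table supplies the ordering of the steps.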

[1] "Towards Biologically Plausible Deep Learning" Yoshua Bengio, Dong-Hyun Lee, Jorg Bornschein and Zhouhan Lin (2015) http://arxiv.org/pdf/1502.04156v2.pdf

[2] "Distributed hierarchical processing in the primate cerebral cortex" Felleman DJ, Van Essen DC (1991) http://www.ncbi.nlm.nih.gov/pubmed/1822724

[3] Felix Andrews HTM temporal pooling and sequence unfolding demo http://viewer.gorilla-repl.org/view.html?source=gist&id=95da4401dc7293e02df3&filename=seq-replay.clj

Labels: Deep Learning, directed acyclic graph, Felix Andrews, sequence unfolding, Temporal Pooling
